Data is only useful when it can be trusted. Most organizations have no shortage of data. The problem is that what they have is duplicate-ridden, inconsistently formatted, incompletely populated, or quietly wrong in ways that only surface when a process depends on it. According to Gartner, poor data quality costs the average enterprise $12.9 million per year. This guide covers what data quality management means in practice, how to measure and improve it, and what a structured program looks like from the ground up.

In this blog, we’ll cover data quality issues, data quality management, how organizations can make the most of their data, and some real-world examples in various industries.

Let’s get started.

Key takeaways:

- Data quality management is the ongoing process of ensuring data is accurate, complete, consistent, and reliable enough to serve its intended purpose across every system that uses it.

- Poor data quality costs the average enterprise $12.9 million per year, according to Gartner. The financial impact shows up in operational rework, failed analytics, and compliance exposure.

- Data quality is measured across six dimensions: accuracy, completeness, consistency, timeliness, uniqueness, and validity.

- Sustainable data quality requires a structured program covering data owners, measurement, process, framework, and technology, not a one-time cleanup effort.

- At enterprise scale, and in fast-evolving businesses, manual data quality management is not viable. Automated tools handle key data quality tasks like profiling, matching, deduplication, and ongoing monitoring at a speed and consistency that manual processes cannot match.

What is Data Quality?

Data quality is defined as the degree to which data fulfills the requirements of an intended purpose. Organizations store, manage, and use large volumes of data every day. If the data fails to serve its purpose, it is considered poor quality.

What counts as good quality differs by organization and use case. A customer dataset used for billing has different quality requirements than one used for segmentation. The deciding factor is always fitness for purpose. To ensure overall data quality, data elements—such as customer details, product information, and addresses—must be standardized and accurate, as these discrete pieces of information are crucial for consistency and reliable processes. Data that cannot reliably support the processes built on it is, by definition, poor quality.

Why Does Data Quality Management Matter?

Poor quality data costs the average enterprise millions per year. That figure covers direct costs like rework and failed initiatives, but the operational damage runs deeper than any single number captures. Implementing a structured data quality management program is essential for organizations to systematically address these challenges.

Operational Inefficiencies

When data is unreliable, teams spend significant portions of their working day manually checking, correcting, and reconciling records before they can use them. This is time that should be going into analysis and decision-making. For data teams and business analysts, it’s not possible to work around bad data because all of their tasks and processes depend on it. Effective data quality management is essential to maintain operational efficiency across all departments.

Flawed Decision-making

Business leaders rely on data for product, market, and resource decisions. When the underlying data is inconsistent or duplicate-ridden, every report, forecast, and model built on it carries compounding inaccuracy.

Compliance Exposure

Regulations including GDPR, HIPAA, and CCPA require accurate, current, and complete recordkeeping. Duplicate records complicate data subject access requests. Missing fields create audit gaps. Poor data quality is not just an operational problem — it is a regulatory one. Robust data quality management practices are essential for achieving regulatory compliance, ensuring data integrity and supporting organizational accountability.

Customer experience failures

Inaccurate customer data produces fragmented customer experiences: duplicate communications, incorrect addresses, mismatched records across touchpoints. These failures are visible to the customer in ways that internal data problems are not.

Understanding Data Quality Management

We have covered the fundamentals of data quality and how data quality issues relate to business risks. Now it’s time to look at data quality management itself: how you can fix data quality, manage it consistently over time, and get the full benefit it can offer your business. Let’s begin.

What is data quality management?

Data quality management is defined as:

Implementing a systematic framework that continuously profiles data sources, verifies the quality of information, and executes a number of processes to eliminate data quality errors – in an effort to make data more accurate, correct, valid, complete, and reliable.

Data quality assessment is a critical first step in evaluating and profiling data to identify issues, measure data quality metrics, and establish a baseline for improvement.

Since the requirements and characteristics of data quality are different for every organization, data quality management also differs between enterprises. The types of people you need to manage data quality, the metrics you need to measure it, the data quality processes you need to implement – everything depends on multiple factors, such as company size, dataset size, and the sources involved.

Here, we discuss the main pillars of data quality implementation and management that will give you a good idea about how to go about ensuring data quality at your company for your specific requirements. Data quality frameworks should be regularly revisited and updated to adapt to evolving business needs, technology, and regulations.

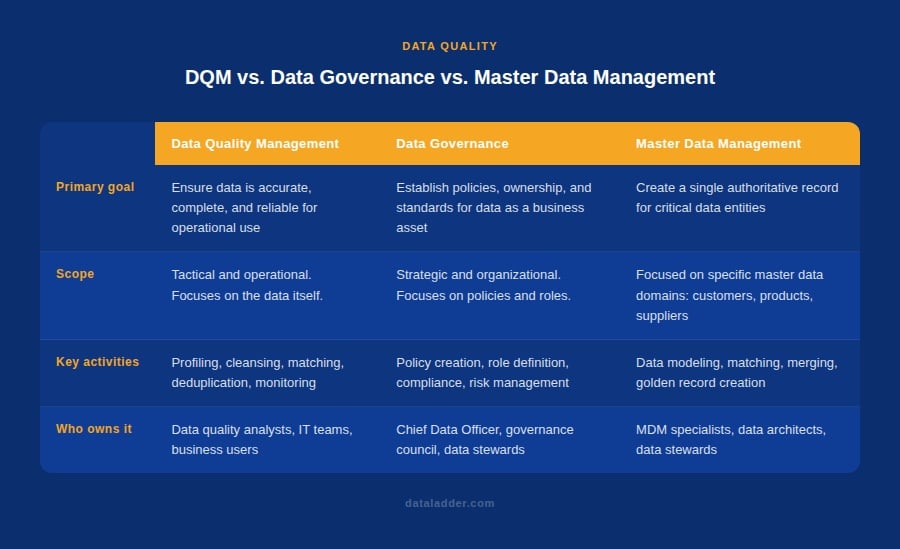

Data Quality Management vs Data Governance vs Master Data Management

These three terms are frequently used interchangeably and they should not be. They describe related but distinct disciplines, and understanding the difference matters when deciding which approach an organization actually needs.

Data quality management is the operational practice of ensuring data is accurate, complete, consistent, and reliable. It covers the day-to-day work of profiling, cleansing, matching, and deduplicating data across systems.

Data governance is the strategic framework of policies, roles, and standards that determines how data is owned, accessed, and managed across the organization. A data governance committee oversees the implementation of data governance policies and assigns roles such as data stewards, who are responsible for authoring and maintaining data quality rules, policies, and metadata to ensure data quality standards are met. It defines the rules that data quality management then enforces.

Master data management is the practice of creating and maintaining a single authoritative record for the entities that matter most to the business: customers, products, vendors, locations, and employees. It draws on both data quality and governance to produce what is commonly called a golden record.

How Do Data Quality Issues Enter the System?

There are multiple ways data quality errors can end up in your system. Let’s take a look at what they are.

1. Lack of proper data modeling

This is the first and most significant reason behind data quality errors. It arises when your IT team does not spend enough time or resources on data modeling while adopting new technology – whether it is a new web application, database system, or integration/migration between existing systems.

Data modeling helps to organize and give structure to your data assets and elements. Your data models can be susceptible to any of the following issues:

a. Lack of hierarchical constraints:

This relates to when there are no appropriate relationship constraints within your data model. For example, you have a different set of fields for Existing Customers and New Customers, but you use a generic Customer model for both, rather than having Existing Customers and New Customers as subtypes of the supertype Customer.

b. Lack of relationship cardinality:

This relates to when there is no number defined that represents the number of relations one entity can have with another. For example, one Order can only have one Discount at a time.

c. Lack of referential integrity:

This relates to when a record in one dataset refers to a record in another one that is not present. For example, the Sales table refers to a list of Product IDs that are not present in the Products table.
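
As a minimal sketch of the referential integrity problem described above, the following Python snippet finds sales rows that point at product IDs missing from the products table. The table contents, field names, and the `find_orphan_sales` helper are hypothetical and only serve the illustration.

```python
# Hypothetical tables represented as plain Python structures.
products = {"P-100": "Denim jacket", "P-200": "Running shoe"}
sales = [
    {"sale_id": 1, "product_id": "P-100"},
    {"sale_id": 2, "product_id": "P-300"},  # refers to a product that does not exist
    {"sale_id": 3, "product_id": "P-200"},
]


def find_orphan_sales(sales_rows, product_ids):
    """Return the sales rows whose product_id has no matching product."""
    return [row for row in sales_rows if row["product_id"] not in product_ids]


orphans = find_orphan_sales(sales, set(products))
print(orphans)
```

In a relational database, a foreign key constraint would typically reject such rows at write time; a check like this is mainly useful for data that has already been loaded without one.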

2. Lack of unique identifiers

This relates to when there is no way to uniquely identify a record, leading you to store duplicate records for the same entity. Records are uniquely identified by storing attributes like Social Security Number for Customers, Manufacturer Part Number for Products, etc.

3. Lack of validation constraints

This relates to when data values are not run through the required validation checks before being stored in the database. For example, checking the required fields are not missing, validating the pattern, data type, size, and format of data values, and also ensuring that they belong to a range of acceptable values.
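
The checks listed above can be sketched in a few lines of Python. This is an illustrative example under assumed rules, not a definitive implementation: the field names, the simplified email pattern, the size limit, the age range, and the list of accepted countries are all hypothetical.

```python
import re

# Hypothetical rules for an illustrative customer record.
REQUIRED_FIELDS = ("name", "email", "country", "age")
EMAIL_PATTERN = re.compile(r"[^@\s]+@[^@\s]+\.[^@\s]+")  # deliberately simplified
ALLOWED_COUNTRIES = {"US", "CA", "GB"}
MAX_NAME_LENGTH = 100


def validate_customer(record):
    """Return a list of human-readable validation errors (empty if valid)."""
    errors = []

    # Required-field check.
    for field in REQUIRED_FIELDS:
        if record.get(field) in (None, ""):
            errors.append(f"{field}: missing required value")

    # Pattern and size checks on text fields.
    email = record.get("email")
    if email and not EMAIL_PATTERN.fullmatch(email):
        errors.append("email: does not match the expected pattern")
    name = record.get("name")
    if name and len(name) > MAX_NAME_LENGTH:
        errors.append("name: longer than the allowed size")

    # Data type and range checks.
    age = record.get("age")
    if age not in (None, ""):
        if not isinstance(age, int):
            errors.append("age: expected an integer")
        elif not 0 <= age <= 120:
            errors.append("age: outside the accepted range 0-120")

    # Membership in a list of acceptable values.
    country = record.get("country")
    if country and country not in ALLOWED_COUNTRIES:
        errors.append("country: not in the list of accepted values")

    return errors


good = {"name": "Ana Lopez", "email": "ana@example.com", "country": "US", "age": 34}
bad = {"name": "", "email": "not-an-email", "country": "FR", "age": 140}
print(validate_customer(good))
print(validate_customer(bad))
```

Returning a list of errors rather than stopping at the first failure lets the caller report every problem with a record in one pass.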

4. Lack of integration quality

This relates to when your company has a central database that connects to multiple sources and integrates incoming data to represent a single source of information. If this setup lacks a central data quality engine for cleaning, standardizing, and merging data, then it can give rise to many data quality errors.

5. Lack of data literacy skills

Despite all the right efforts being made to protect data and its quality across datasets, a lack of data literacy skills in an organization can still cause a lot of damage to your data. Employees often store wrong information because they don’t understand what certain attributes mean. Moreover, they are often unaware of the consequences of their actions, such as the implications of updating data in a certain system or for a certain record.

6. Data entry errors

Mistypes and misspellings are among the most common sources of data quality errors. Humans are known to make at least 400 errors while doing 10,000 data entries. This shows that even with unique identifiers, validation checks, and integrity constraints in place, human error can still intervene and degrade your data quality.

To bring relevant decision makers on board, it is important to educate them on how big and small data quality issues are impacting the business. A data flaw – business risk matrix, like the one shown below, can help you to do just that.

What Are the 5 Pillars of Data Quality Management?

In this section, we look at the most important pillars of data quality management: people, measurement, processes, framework, and technology.

1. People: Who is Involved in Data Quality Management?

It is a common belief that managing data quality across the organization is mainly a matter of getting approvals and buy-in from decision-makers. In truth, you also need data professionals appointed at different levels of seniority to ensure your investments in data quality initiatives pay off.

Here are some roles that are either responsible, accountable, consulted, or informed about data quality control in an organization:

1. Chief Data Officer (CDO): A Chief Data Officer (CDO) is an executive-level position, solely responsible for designing strategies that enable data utilization, data quality monitoring, and data governance across the enterprise.

2. Data steward: A data steward is the go-to person at a company for every matter related to data. They are completely hands-on in how the organization captures data, where it is stored, what it means for different departments, and how its quality is maintained throughout its lifecycle.

3. Data custodian: A data custodian is responsible for the structure of data fields – including database structures and models.

4. Data analyst: A data analyst is someone capable of taking raw data and converting it into meaningful insights, especially in specific domains. A main part of a data analyst’s job is to prepare, clean, and filter the required data.

5. Other teams: These roles are considered to be data consumers, which means they use data – either in its raw form or when it is converted into actionable insights, such as sales and marketing teams, product teams, business development teams, etc.

Read more about Building a data quality team: roles and responsibilities to consider.

2. Measurement: How is Data Quality Measured?

The second most important aspect of data quality management is its measurement. These are data characteristics and key performance indicators that validate the presence of data quality in organizational datasets. Depending on how your company uses data, these KPIs may differ. I have listed the most important data quality dimensions and the quality metric they represent:

- Accuracy: How well do data values depict reality or correctness?

- Lineage: How trustworthy is the originating source of data values?

- Semantic: Are data values true to their meaning?

- Structure: Do data values exist in the correct pattern and/or format?

- Completeness: Is your data as comprehensive as you need it to be or are there missing values?

- Consistency: Do disparate data stores have the same data values for the same records?

- Currency: Is your data acceptably up to date?

- Timeliness: How quickly is the requested data made available?

- Reasonableness: Do data values have the correct data type and size?

- Identifiability: Does every record represent a unique identity and is not a duplicate?
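
To make a few of these dimensions concrete, here is a small Python sketch that turns completeness, identifiability, and structure into rates between 0 and 1. The sample records, field names, and the simplified email pattern are assumptions made for the example; real KPIs would be defined per dataset.

```python
import re

# Hypothetical sample records; the last row repeats the first one.
records = [
    {"id": "C1", "email": "ana@example.com", "phone": "555-0100"},
    {"id": "C2", "email": "", "phone": "555-0101"},
    {"id": "C3", "email": "bob@example", "phone": None},
    {"id": "C1", "email": "ana@example.com", "phone": "555-0100"},
]


def completeness(rows, field):
    """Share of rows in which the field is populated."""
    filled = sum(1 for row in rows if row.get(field) not in (None, ""))
    return filled / len(rows)


def identifiability(rows, key):
    """Share of rows that carry a distinct value for the key."""
    return len({row[key] for row in rows}) / len(rows)


def structure(rows, field, pattern):
    """Share of populated values that match the expected pattern."""
    values = [row[field] for row in rows if row.get(field) not in (None, "")]
    if not values:
        return 0.0
    return sum(1 for value in values if re.fullmatch(pattern, value)) / len(values)


print(completeness(records, "email"))
print(identifiability(records, "id"))
print(structure(records, "email", r"[^@\s]+@[^@\s]+\.[^@\s]+"))
```

Expressing each dimension as a rate makes it straightforward to compare a dataset against a target and to track the same figure over time.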

Read more about Data quality dimensions – 10 metrics you should be measuring.

3. Process: What are Data Quality Processes?

Since data has grown massively in the last few decades, it has become multi-variate and is measured in multiple dimensions. To fetch, fix, and improve data quality issues, you must implement a variety of data quality processes – where each one of them serves a different, valuable purpose.

These processes are essential for improving data quality by addressing specific data quality issues and implementing specific data quality rules that ensure accuracy, consistency, and completeness. Let’s take a look at the most common data quality processes that companies use to improve their data quality.

a. Data profiling

It is the process of understanding the current state of your data by uncovering hidden details about its structure and contents. A data profiling algorithm analyzes dataset columns and computes statistics for various dimensions, such as completeness, uniqueness, frequency, character, and pattern analysis, etc.
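
A minimal sketch of what such a profiling pass might compute for one column is shown below. The `profile_column` and `value_pattern` helpers and the sample phone values are hypothetical; the pattern analysis simply maps letters to `A` and digits to `9` so that differently formatted values stand out.

```python
from collections import Counter


def value_pattern(value):
    """Map letters to 'A' and digits to '9'; keep every other character."""
    chars = []
    for ch in value:
        if ch.isalpha():
            chars.append("A")
        elif ch.isdigit():
            chars.append("9")
        else:
            chars.append(ch)
    return "".join(chars)


def profile_column(values):
    """Compute basic completeness, uniqueness, frequency, and pattern statistics."""
    non_null = [v for v in values if v not in (None, "")]
    return {
        "count": len(values),
        "completeness": len(non_null) / len(values) if values else 0.0,
        "distinct": len(set(non_null)),
        "most_common": Counter(non_null).most_common(1),
        "patterns": Counter(value_pattern(v) for v in non_null),
    }


phones = ["555-0100", "555-0101", "5550102", None, "555-0100"]
report = profile_column(phones)
print(report)
```

Here the pattern counts reveal that one phone number is stored without a hyphen, which is the kind of hidden detail profiling is meant to surface before cleansing begins.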

b. Data cleansing and standardization

It is the process of eliminating incorrect and invalid information present in a dataset to achieve a consistent and usable view across all data sources. It involves removing and replacing incorrect values, parsing longer columns, transforming letter cases and patterns, and merging columns, etc.

c. Data matching

Also known as record linkage and entity resolution, it is the process of comparing two or more records and identifying whether they belong to the same entity. It involves mapping the same columns, selecting columns to match on, executing match algorithms, analyzing match scores, and tuning the match algorithms to get accurate results.
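
The idea of scoring a pair of records can be illustrated with Python's standard `difflib` module. This is only a sketch: the column weights, the 0.85 threshold, and the sample records are assumptions, and `SequenceMatcher` stands in for whatever match algorithm a dedicated tool would actually use.

```python
from difflib import SequenceMatcher


def similarity(a, b):
    """Similarity between two strings, from 0.0 to 1.0, ignoring case and outer spaces."""
    return SequenceMatcher(None, a.lower().strip(), b.lower().strip()).ratio()


# Hypothetical columns to match on, with weights that sum to 1.
WEIGHTS = {"name": 0.6, "city": 0.4}
MATCH_THRESHOLD = 0.85  # assumed cut-off; tune it against reviewed results


def match_score(rec_a, rec_b, weights=WEIGHTS):
    """Weighted average of per-column similarities."""
    return sum(w * similarity(rec_a[f], rec_b[f]) for f, w in weights.items())


a = {"name": "Jonathan Smith", "city": "Boston"}
b = {"name": "Jonathon Smith", "city": "boston"}
c = {"name": "Maria Garcia", "city": "Denver"}

is_match_ab = match_score(a, b) >= MATCH_THRESHOLD
is_match_ac = match_score(a, c) >= MATCH_THRESHOLD
print(is_match_ab, is_match_ac)
```

Tuning, as described above, then amounts to reviewing pairs near the threshold and adjusting the weights or the cut-off until false matches and missed matches are acceptably rare.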

d. Data deduplication

It is the process of eliminating multiple records that belong to the same entity and retaining only one record per entity. This includes analyzing the duplicate records in a group, marking records that are duplicates, and then deleting them from the dataset.

e. Data merge and survivorship

It is the process of building rules that merge duplicate records together through conditional selection and overwriting. This helps you to prevent data loss and retain maximum information from duplicates. It involves defining rules for master record selection and overwriting, executing rules, and tuning them to get accurate results.
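
One possible survivorship rule, sketched in Python, is to pick the most recently updated record as the master and fill its empty fields from the older duplicates. The rule itself, the `merge_group` helper, and the sample records are assumptions for illustration; real rules depend on which sources you trust for which fields.

```python
def merge_group(records):
    """Merge duplicates: the newest record is the master; fill its blanks from the rest."""
    # ISO-formatted dates sort correctly as plain strings.
    ordered = sorted(records, key=lambda r: r["updated"], reverse=True)
    master = dict(ordered[0])
    for other in ordered[1:]:
        for field, value in other.items():
            # Overwrite only when the master has no value and the duplicate does.
            if master.get(field) in (None, "") and value not in (None, ""):
                master[field] = value
    return master


duplicates = [
    {"name": "J. Smith", "email": "js@example.com", "phone": "", "updated": "2023-01-10"},
    {"name": "John Smith", "email": "", "phone": "555-0100", "updated": "2024-03-02"},
]
golden = merge_group(duplicates)
print(golden)
```

The merged record keeps the newer name and phone number while recovering the email address that only the older record held, so nothing is lost when the duplicate is removed.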

f. Data governance

The term data governance usually refers to a collection of roles, policies, workflows, standards, and metrics, that ensure efficient data usage and security, and enable a company to reach its business objectives. It involves creating data roles and assigning permissions, designing workflows to verify information updates, ensuring data is safe from security risks, etc.

g. Address verification

It is the process of running addresses against an authoritative database – such as USPS in the US – and validating that the address is a mailable, accurate, and valid location within the country for delivering mail.

Read more about the 5 data quality processes to know before designing a DQM framework.

4. Framework: What is a Data Quality Framework?

Apart from data quality processes, another important aspect to consider while designing a data quality strategy is a data quality framework. The processes represent stand-alone techniques used to eliminate data quality issues from your datasets. A data quality framework is a systematic process that consistently monitors data quality, implements a variety of data quality processes (in a defined order), and ensures that it doesn’t deteriorate below defined thresholds. It gives more details about the data quality management process flow.

A simple data quality framework consists of four stages:

Assess: This is the first step of the framework where you need to assess the two main components: the meaning of data quality for your business and how the current data scores against it.

Design: The next step in data quality framework is to design the required business rules, by selecting the data quality processes you need and tuning them to your data, as well as deciding the architectural design of data quality functions.

Execute: The third stage of the cycle is where the execution happens. You have prepared the stage in the previous two steps, now it’s time to see how well the system actually performs.

Monitor: This is the last stage of the framework where the results are monitored. You can use advanced data profiling techniques to generate detailed performance reports.
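
The Monitor stage can be sketched as a comparison between the latest measured scores and the thresholds set earlier in the cycle. The dimension names, threshold values, and the `breaches` helper below are hypothetical and only illustrate the idea.

```python
# Hypothetical thresholds agreed during the Assess and Design stages.
THRESHOLDS = {"completeness": 0.95, "uniqueness": 0.99, "validity": 0.90}


def breaches(scores, thresholds=THRESHOLDS):
    """Return {dimension: (score, threshold)} for every score below its threshold."""
    failing = {}
    for dimension, minimum in thresholds.items():
        score = scores.get(dimension, 0.0)  # a missing measurement counts as failing
        if score < minimum:
            failing[dimension] = (score, minimum)
    return failing


latest_run = {"completeness": 0.97, "uniqueness": 0.93, "validity": 0.91}
alerts = breaches(latest_run)
for dimension, (score, minimum) in alerts.items():
    print(f"{dimension}: {score:.2f} is below the threshold {minimum:.2f}")
```

Any breach reported here would send the cycle back to the Assess or Design stage for that dimension.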

Read more about Designing a framework for data quality management.

5. Technology: What are Data Quality Management Tools?

Despite the nature of data quality issues being quite complex, many businesses still validate data quality manually – giving way to multiple errors. Adopting a technological solution to this problem is the best way to ensure your team’s productivity and smooth implementation of a data quality framework. There are many vendors that package data quality functions in different offerings, such as:

a. Stand-alone, self-service data quality software

This type of data quality management software allows you to run a variety of data quality processes on your data. It usually comes with automated data quality management or batch processing features to clean, match, and merge large amounts of data at specified times in a day. It is one of the quickest and safest ways to consolidate data records without losing any important information, since all processes are executed on a copy of the data and the final data view can be transferred to a destination source.

b. Data quality API or SDK

Some vendors expose the necessary data quality functions through APIs or SDKs. This helps you to integrate data quality management features into your existing applications in real time or at runtime. Read more about Data quality API: Functions, architecture, and benefits.

c. Data quality embedded in data management tools

Some vendors embed data quality capabilities within centralized data management platforms so that everything is taken care of in the same data pipeline. Designing an end-to-end data management system with embedded data quality function requires you to conduct detailed planning and analysis as well as involve key stakeholders at every step of the process. Such systems are often packaged as master data management solutions.

How does data quality management differ from master data management?

The term ‘master data management’ refers to a collection of best practices for data management that involves data integration, data quality, and data governance. This means that data quality management and master data management are not opposites; they complement each other. MDM solutions contain some extra capabilities in addition to data quality management features.

This makes MDM a more complex and resource-intensive solution to implement, which is something to consider while choosing between the two approaches.

d. Custom in-house solutions

Despite various data quality and master data management solutions present in the market, many businesses invest in developing an in-house solution for their custom data needs. Although this may sound very promising, businesses often end up wasting a great number of resources – time and money – in this process. The development of such a solution may be easier to implement, but it is almost impossible to maintain over time.

To know more about this, you can read our whitepaper: Why in-house data quality projects fail.

How to Build and Implement a Data Quality Management Framework

A data quality management framework follows a defined sequence. The exact scope and timeline vary by organization, but the steps themselves do not. The process begins with understanding and profiling data assets, followed by a thorough quality assessment and ongoing data quality activities.

Step 1: Define what data quality means for your organization

Identify which data domains are most critical to your business processes. This generally includes data on customers, vendors, products, and employees. Define the quality threshold each domain needs to meet and the specific fields where quality failures would cause operational problems. This step will help you define data quality rules for your organization and help you maintain data accuracy and integrity as you move forward. It is also essential to assign responsibility to specific teams or individuals for maintaining data accuracy and resolving issues.

Step 2: Profile your source data

Run a data profiling pass against every source system before any cleansing or migration work begins. Profiling reveals the data issues that exist in your datasets and helps you truly assess data quality. You’ll find out the rate of data uniqueness, how many missing values exist in your datasets, null rates on mandatory fields, format inconsistencies, and the volume of records that appear obsolete. Without data profiling, it becomes nearly impossible to maintain high-quality data in the organization, especially as it undergoes cleansing or transformation.

Step 3: Standardize formats across sources

Normalize dates, addresses, company names, and phone numbers to consistent formats before matching begins. This not only helps maintain data integrity but also ensures that issues do not keep returning as your business evolves. Attempting to deduplicate records before standardization means comparing “123 Main St.” against “123 Main Street” as different entries and missing the duplicate entirely, which undermines the rest of your data quality efforts.
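
The address example above can be reproduced with a small Python sketch. The abbreviation table and helper names are hypothetical and far from complete; note, for instance, that "St" can also mean "Saint", which a lookup this naive cannot distinguish.

```python
import re

# Hypothetical, deliberately small lookup of street-type abbreviations.
STREET_ABBREVIATIONS = {
    "st": "street",
    "ave": "avenue",
    "rd": "road",
    "blvd": "boulevard",
}


def standardize_address(address):
    """Lowercase, drop periods and commas, and expand known abbreviations."""
    tokens = re.sub(r"[.,]", " ", address.lower()).split()
    return " ".join(STREET_ABBREVIATIONS.get(token, token) for token in tokens)


def standardize_phone(phone):
    """Keep digits only, so differently punctuated numbers compare equal."""
    return re.sub(r"\D", "", phone)


print(standardize_address("123 Main St."))
print(standardize_address("123 Main Street"))
print(standardize_phone("(555) 010-0100"))
```

After this step both address variants reduce to the same string, so even an exact comparison would catch the duplicate.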

Step 4: Match and deduplicate

Use a combination of exact and fuzzy matching to identify records across sources that refer to the same real-world entity. You should also apply survivorship rules based on key data quality metrics to consolidate matched records into single authoritative master records, retaining the most complete and most trusted field values from each source. This prevents low quality data from moving into target systems and can prevent data quality issues in the future.
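
One way to combine the two approaches is an exact pass on a reliable field followed by a fuzzy fallback, as in this Python sketch. The choice of email as the exact key, the postal code guard, the 0.9 threshold, and the sample records are all assumptions; `difflib.SequenceMatcher` stands in for a production-grade fuzzy algorithm.

```python
from difflib import SequenceMatcher


def same_entity(a, b, threshold=0.9):
    """Decide whether two records refer to the same real-world entity."""
    # Exact pass: an identical, non-empty email is treated as conclusive.
    if a["email"] and a["email"].lower() == b["email"].lower():
        return True
    # Fuzzy fallback: compare names, but only when postal codes agree.
    if a["zip"] != b["zip"]:
        return False
    ratio = SequenceMatcher(None, a["name"].lower(), b["name"].lower()).ratio()
    return ratio >= threshold


crm = {"name": "Acme Corporation", "email": "info@acme.example", "zip": "02110"}
erp = {"name": "ACME Corp.", "email": "INFO@acme.example", "zip": "02110"}
billing = {"name": "Acme Corporatoin", "email": "", "zip": "02110"}
other = {"name": "Acme Corporation", "email": "", "zip": "94105"}

print(same_entity(crm, erp))      # matched by the exact pass on email
print(same_entity(crm, billing))  # matched by the fuzzy pass despite the typo
print(same_entity(crm, other))    # rejected: postal codes differ
```

Running the cheap exact pass first keeps the more expensive and less certain fuzzy comparison for the records that genuinely need it.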

Step 5: Establish governance

Data governance is a key component of effective data quality management, and implementing comprehensive data governance policies is essential for establishing compliance frameworks, defining roles and responsibilities, and addressing root causes of data quality issues. To create a robust data governance framework, define data validation rules at the point of entry.

You should also schedule regular profiling audits against the quality thresholds established in step one. Without ongoing governance, including strong data governance policies, it becomes difficult to maintain data integrity, as the work done in steps two through four needs to be repeated within months of go-live to maintain data quality.

Real World Examples of Data Quality Management

In this final section of our guide, we will look at some data quality use cases to see how renowned brands are using data cleansing and matching tools to manage the quality of their data, and what they have to say about it.

1. Data Quality management in retail

Buckle is a leading upscale retailer of denim, sportswear, outerwear, footwear, and accessories, with over 450 stores in 43 states. Buckle was dealing with the challenge of sorting through large amounts of data records from hundreds of stores. The main task at hand was eliminating all the duplicate information that had been loaded into their current iSeries DB2 system. They were looking for an efficient way to remove duplicate data, which accounted for approximately 10 million records.

DataMatch Enterprise provided a usable and more efficient solution for Buckle. The company was able to run a large number of records through the deduplication process as one project using a single software tool as opposed to using several different methods.

2. Data quality management in healthcare

St. John Associates provides placement and recruiting services in Cardiology, Emergency Medicine, Gastroenterology, Neurological Surgery, Neurology, Orthopedic Surgery, and other fields. With a growing database of recruitment candidates, St. John Associates needed a way to dedupe, clean and match records. After several years of performing this task manually, the company decided it was time to deploy a tool to reduce the time spent on cleaning records.

With DataMatch Enterprise, St. John Associates was able to perform an initial data cleansing operation, finding, merging and purging hundreds of thousands of records in a short period of time. DataMatch helped speed up the process of deduplication through fuzzy matching algorithms and made sorting through data fields to find null information easier. It also eliminated the need for manual entry, enabling users to export changes and upload them as needed.

3. Data Quality management in financial services

Bell Bank is one of the largest independently owned banks in the nation, with assets of more than $6 billion and business in all 50 states. As a large private bank, Bell Bank deals with many vendor partners and dozens of service lines – from mortgage to insurance, from retirement to wealth management and many more. With information siloed away and stored in disparate data sources, the bank found it challenging to get a single, consolidated view of its customers; not to mention, they also were incurring unnecessary expenses as a result of sending multiple mails to one vendor or customer.

DataMatch Enterprise forms a critical part of the bank’s larger in-house data management solution, allowing them to easily group results and hand back the list of all customer records that are believed to belong to one entity. This consolidated view will help the bank truly understand their customers’ association with the bank and the steps they can take to further strengthen this association.

4. Data quality management in sales and marketing

TurnKey Auto Events conducts high-volume car buying campaigns for automotive dealers nationwide. They produce events that compel car buyers to attend and purchase vehicles. As a service provider that provides sales leads for automotive vendors, TurnKey Marketing was looking to receive credit for additional sales procured with the various dealerships they partner with.

By being able to match sales with the multitude of potential prospects they speak to daily, they receive sales credit (and earn money) for each lead. Using DataMatch, Data Ladder’s sophisticated data matching product, the company was able to match records from several sources. From there they were able to create a bird’s eye view of a potential car sale over time.

5. Data quality management in education

West Virginia University is the state’s only research, doctoral degree-granting, land-grant university. The school offers nearly 200 degree programs at the undergraduate, graduate, doctoral, and professional levels. They were tasked with assessing the long-term impacts of certain medical conditions on patients over an extended period of time. The data for the medical conditions and the current health records provided by the state exist in separate systems.

Using DataMatch, Data Ladder’s flagship data cleansing product, the university was able to clean records from several systems containing the required information. From there they were able to create a unified view of the patient over time.

Frequently Asked Questions About Data Quality Management

What is data quality management?

Data quality management is the ongoing process of ensuring that an organization’s data is accurate, complete, consistent, valid, timely, and unique enough to reliably support the operations and decisions built on it. It covers profiling, cleansing, matching, deduplication, and governance across all data sources.

What are the main causes of poor data quality?

The most common causes are missing validation rules at the point of data entry, inconsistent formats inherited from multiple source systems, absence of unique identifiers that allow duplicate records to accumulate, insufficient data modeling constraints, and manual entry errors.

What is the difference between data quality management and data governance?

Data governance sets the policies, roles, and standards for managing data as a business asset. Data quality management executes against those policies — it is the tactical work of profiling, cleansing, and maintaining the actual data. Governance without quality management means well-documented bad data. Quality management without governance means clean data that degrades again within months.

How is data quality measured?

Data quality is measured against six dimensions: accuracy, completeness, consistency, timeliness, uniqueness, and validity. Each dimension is tracked through specific KPIs, such as duplicate record rate, null field rate, format conformance rate, and record freshness. Data profiling tools generate these measurements automatically against defined thresholds.

Who is responsible for data quality in an organization?

Responsibility is distributed. Business functions own the quality of their data domains because they define what correct looks like in their operational context. IT provides the infrastructure and tooling. Data stewards serve as the day-to-day owners of specific domains. A Chief Data Officer sets strategy at the executive level. Without named ownership per domain, quality problems accumulate without a clear path to resolution.

How long does it take to implement a data quality management program?

A focused data quality initiative on a single domain with a modern tool can produce measurable results within weeks. An enterprise-wide program covering multiple domains and source systems typically takes three to six months to establish, with ongoing maintenance and governance continuing indefinitely. The timeline depends on data volume, the number of source systems, and the severity of existing quality issues.

What is the difference between data deduplication and data matching?

Data matching identifies records across datasets that refer to the same real-world entity, including near-identical records that exact matching would miss. Data deduplication consolidates those matched records into single authoritative master records. Matching identifies the problem. Deduplication resolves it.

The Final Word

Business leaders understand the importance of data – from routine operations to advanced business intelligence, it is utilized everywhere. But most teams working with data spend extra hours because of duplicate work, lack of data knowledge, and faulty results. And all these issues arise due to poor or no management of data quality.

Investing in data quality tools, such as DataMatch Enterprise, will definitely help you to get started with data quality management. DataMatch will take you through different stages of data cleansing and matching. Starting from importing data from various sources, it guides you through data profiling, cleansing, standardization, and deduplication. On top of that, its address verification module helps you to verify addresses against the official USPS database.

DataMatch also offers scheduling features for batch processing records or you can utilize its API to integrate data cleansing or matching functions in custom applications and get instant results.

Book a demo today or download a free trial to learn more about how we can help you to get the most out of your data.