List matching software

Trusted By

Definition

What is list matching?

List matching is the process of combining lists that reside in disparate sources, identifying rows that represent the same individual or entity, and merging those rows into a complete and concise list in which each row represents one real-world object.

List matching is commonly used in getting a holistic view of contacts and other entities by consolidating their information spread across multiple vendor, client, and partner datapoints received at regular intervals. By establishing unique IDs and match definitions to identify mis-keyed, abbreviated, and phonetic matches within and across multiple lists, companies can determine golden records and remove conflicting and duplicate entries.

Process

How does list matching work?

Combine and profile lists

Bring lists together in one place and build a quick data quality summary report that highlights missing, incomplete, or invalid values and identifies potential list cleansing opportunities.
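As a rough illustration of what a profiling summary might contain, the sketch below counts missing values per column in a small list. The sample data and column names are assumptions made up for this example, and this is not how DataMatch Enterprise builds its report.

```python
import csv
import io

# Hypothetical sample list; the column names are assumptions for illustration.
SAMPLE = """name,email,phone
Jane Doe,jane@example.com,555-0100
John Smith,,555-0101
,not-an-email,
"""

def profile(rows, columns):
    """Count missing (empty or whitespace-only) values per column."""
    summary = {col: {"total": 0, "missing": 0} for col in columns}
    for row in rows:
        for col in columns:
            summary[col]["total"] += 1
            if not (row.get(col) or "").strip():
                summary[col]["missing"] += 1
    return summary

rows = list(csv.DictReader(io.StringIO(SAMPLE)))
report = profile(rows, ["name", "email", "phone"])
for col, stats in report.items():
    print(f"{col}: {stats['missing']} of {stats['total']} missing")
```

A fuller profile would also flag invalid values (for example, the malformed email in the last row), which needs a per-column validation rule.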

Perform list matching

Match rows within and across lists and identify potential rows that relate to the same individual or entity. Lists usually lack uniquely identifying attributes, and so a combination of intelligent fuzzy matching algorithms may be needed to increase list matching accuracy.
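To make the idea of fuzzy matching concrete, here is a minimal sketch that scores every pair of names with Python's standard-library `difflib.SequenceMatcher` and keeps pairs above a threshold. The sample names and the 0.85 threshold are assumptions for illustration; commercial tools typically combine several algorithms rather than a single similarity ratio.

```python
from difflib import SequenceMatcher
from itertools import combinations

def similarity(a, b):
    """Case-insensitive similarity ratio between 0.0 and 1.0."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()

def candidate_pairs(values, threshold=0.85):
    """Return (index_a, index_b, score) for every pair scoring at or above threshold."""
    pairs = []
    for (i, a), (j, b) in combinations(enumerate(values), 2):
        score = similarity(a, b)
        if score >= threshold:
            pairs.append((i, j, round(score, 2)))
    return pairs

# Hypothetical names, as they might appear across two merged lists.
names = ["Jonathan Smith", "Jonathon Smith", "Maria Garcia", "Jon Smyth"]
pairs = candidate_pairs(names)
print(pairs)
```

Comparing every pair is quadratic in the number of rows, so large lists usually need a blocking step (comparing only rows that share, say, a postal code) before scoring.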

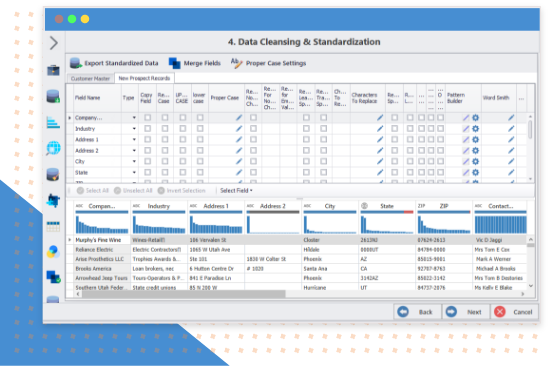

Clean and standardize lists

Perform data cleansing activities to remove statistical and structural anomalies from rows of lists, such as removing leading and trailing spaces, replacing null values, fixing punctuation errors, and more.
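The cleansing activities named above can be sketched with a few lines of standard-library Python. The field names and the `UNKNOWN` placeholder are assumptions chosen for this example, not a prescribed convention.

```python
import re

def clean_value(value, default=""):
    """Trim, collapse inner whitespace, drop trailing punctuation, replace nulls."""
    if value is None:
        return default
    value = value.strip()
    value = re.sub(r"\s+", " ", value)      # collapse runs of whitespace
    value = re.sub(r"[.,;:]+$", "", value)  # remove trailing punctuation
    return value if value else default

def clean_row(row, default=""):
    """Apply clean_value to every field of a row (a dict of column -> value)."""
    return {key: clean_value(val, default) for key, val in row.items()}

# Hypothetical raw row with stray spaces, a null, and a trailing period.
raw = {"name": "  Jane   Doe. ", "city": None, "phone": "555-0100 "}
cleaned = clean_row(raw, default="UNKNOWN")
print(cleaned)
```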

Merge and deduplicate list rows

Merge data from duplicate rows with the help of deduplication and survivorship rules, so that the maximum amount of information is retained and combined into one golden list that represents a complete view of all individuals.
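A survivorship rule decides which value wins when duplicate rows disagree. The sketch below uses one simple, assumed rule (keep the longest non-empty value per field) purely as an illustration; real rules are often field-specific, such as "most recent" or "from the most trusted source".

```python
def merge_group(rows):
    """Merge duplicate rows into one record.

    Assumed survivorship rule: for each field, keep the longest
    non-empty value seen in any of the duplicate rows.
    """
    golden = {}
    for row in rows:
        for field, value in row.items():
            value = (value or "").strip()
            current = golden.setdefault(field, "")
            if len(value) > len(current):
                golden[field] = value
    return golden

# Hypothetical duplicate rows already identified as the same person.
duplicates = [
    {"name": "J. Doe", "email": "jane@example.com", "phone": ""},
    {"name": "Jane Doe", "email": "", "phone": "555-0100"},
]
golden = merge_group(duplicates)
print(golden)
```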

Finalize and export list

Export the final list in the format of your choice or load it back to the required database, cloud store, or other web application.

Solution

Let Data Ladder handle your list matching

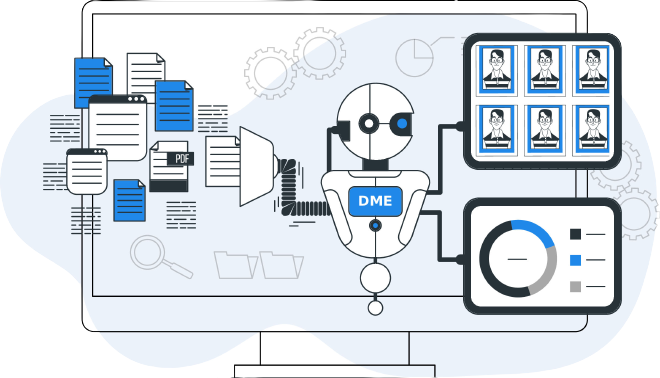

See DataMatch Enterprise at work

DataMatch Enterprise (DME) is a highly visual and intuitive list matching software application, specifically designed to solve customer and contact data quality issues. It automates the list matching process and relieves you of the manual effort required to match lists containing more than a million rows. DME intelligently identifies acronyms, name reversals and variations, phonetic words, misspellings, and abbreviations.

DataMatch leverages multiple industry-standard and proprietary algorithms to detect phonetic, fuzzy, miskeyed, and abbreviated variations in your lists and databases. The suite allows you to build scalable configurations for data standardization, deduplication, record linkage, and enrichment across lists from multiple sources, such as Excel, text files, SQL, Oracle, ODBC, etc.

Business benefits

How can list matching benefit you?

Multiple matching algorithms

Enhance matching possibilities for diverse data types by selecting from exact, phonetic, fuzzy, or alphanumeric matching algorithms.
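To show how a phonetic algorithm differs from exact matching, here is a sketch of American Soundex, a well-known industry-standard phonetic code. It is offered only as an example of the category; the source does not say which phonetic algorithms DataMatch Enterprise uses.

```python
# Letter groups that share a Soundex digit.
CODES = {}
for letters, digit in (("BFPV", "1"), ("CGJKQSXZ", "2"), ("DT", "3"),
                       ("L", "4"), ("MN", "5"), ("R", "6")):
    for ch in letters:
        CODES[ch] = digit

def soundex(name):
    """Return the 4-character American Soundex code for a name."""
    letters = [c for c in name.upper() if c.isalpha()]
    if not letters:
        return ""
    result = letters[0]
    prev = CODES.get(letters[0], "")
    for ch in letters[1:]:
        if ch in "HW":
            continue  # H and W do not separate letters with the same code
        code = CODES.get(ch, "")
        if code and code != prev:
            result += code
        prev = code  # a vowel resets prev, so a repeated code counts again
    return (result + "000")[:4]

# Names that differ in spelling but sound alike share a code.
print(soundex("Smith"), soundex("Smyth"))
print(soundex("Robert"), soundex("Rupert"))
```

An exact comparison treats "Smith" and "Smyth" as different values, while their Soundex codes are equal, which is why phonetic and exact matching suit different fields.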

Easily configure match results

Rerun match algorithms at varying threshold levels and choose the value that yields the fewest false positives and false negatives.
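Threshold tuning can be pictured as a sweep over candidate values, counting errors at each one. The sketch below assumes you have a small set of scored pairs that were manually labeled as true or false matches; the scores and labels here are invented for illustration.

```python
# Hypothetical (similarity score, is_true_match) pairs from a reviewed sample.
scored_pairs = [
    (0.95, True), (0.90, True), (0.82, False),
    (0.78, True), (0.60, False), (0.40, False),
]

def errors_at(threshold, pairs):
    """Return (false_positives, false_negatives) when matching at threshold."""
    false_pos = sum(1 for score, match in pairs if score >= threshold and not match)
    false_neg = sum(1 for score, match in pairs if score < threshold and match)
    return false_pos, false_neg

def best_threshold(pairs, candidates):
    """Pick the candidate with the fewest total errors (first one wins ties)."""
    return min(candidates, key=lambda t: sum(errors_at(t, pairs)))

candidates = [0.5, 0.6, 0.7, 0.8, 0.9]
for t in candidates:
    fp, fn = errors_at(t, scored_pairs)
    print(f"threshold {t}: {fp} false positives, {fn} false negatives")
best = best_threshold(scored_pairs, candidates)
print("best:", best)
```

Minimizing the simple sum treats both error types as equally costly. If a wrong merge is worse than a missed duplicate, weight false positives more heavily when choosing.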

Flexible matching criteria

Search for matches across lists (inter-list), within a single list (intra-list), or both, and create logical AND/OR expressions based on matching probabilities.
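An AND/OR match definition can be expressed as a small boolean rule over per-field scores. The sketch below is one assumed rule (same email, OR similar name AND similar city) with made-up thresholds and records; it is not the product's own rule syntax.

```python
from difflib import SequenceMatcher

def score(a, b):
    """Case-insensitive similarity ratio between 0.0 and 1.0."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()

def is_match(rec_a, rec_b):
    """Assumed rule: same email OR (similar name AND similar city)."""
    same_email = bool(rec_a["email"]) and rec_a["email"].lower() == rec_b["email"].lower()
    similar_name = score(rec_a["name"], rec_b["name"]) >= 0.85
    similar_city = score(rec_a["city"], rec_b["city"]) >= 0.90
    return same_email or (similar_name and similar_city)

# Hypothetical records from different lists.
a = {"name": "Jonathan Smith", "city": "Boston", "email": ""}
b = {"name": "Jonathon Smith", "city": "Boston", "email": "jsmith@example.com"}
c = {"name": "J. Smith", "city": "Chicago", "email": "jsmith@example.com"}

print(is_match(a, b))  # name and city are similar
print(is_match(b, c))  # emails are identical
print(is_match(a, c))  # neither condition holds
```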

Meet compliance requirements

Ensure accurate match results in vendor and transaction lists to effectively comply with GDPR, CCPA, and HIPAA.

Real-time matching

Automate matching processes across and within datasets through real-time API flows to cut hours off project timelines.

Prep data for survivorship

Define custom survivorship rules for duplicate and master records such as enriching empty values with others and merging unique values into one.

Let’s compare

How accurate is our solution?

There is a 10% chance of losing key personnel; over 5 years, half of all implementations lose the core member who ran and understood the matching program.

Detailed tests were completed on 15 different product comparisons with university, government, and private companies (80K to 8M records). The results below include the effect of false positives.

| Features of the solution | Data Ladder | IBM Quality Stage | SAS Dataflux | In-House Solutions | Comments |
|---|---|---|---|---|---|
| Match Accuracy (between 40K and 8M record samples) | 96% | 91% | 84% | 65-85% | Multi-threaded, in-memory, no-SQL processing to optimize for speed and accuracy. Speed is important because the more match iterations you can run, the more accurate your results will be. |
| Software Speed | Very Fast | Fast | Fast | Slow | A metric for ease of use. Here speed indicates time to first result, not necessarily full cleansing. |
| Time to First Result | 15 Minutes | 2 Months+ | 2 Months+ | 3 Months+ | |
| Purchasing/Licensing Cost | 80 to 95% Below Competition | $370K+ | $220K+ | $250K+ | Includes base license costs. |

Frequently asked questions

Got more questions? Check this out

Ready? Let's go

Try now or get a demo with an expert!