Last Updated on January 11, 2026

Data quality (DQ) and data quality management (DQM) is emerging as a needed business strategy in enterprise-level organizations. Although not a new concept (data quality has been a concern ever since the use of data warehouses), it’s gaining more attention as companies begin to discover the consequences of poor data on customer experiences and operational effectiveness.

Numerous studies conducted by Gartner, Experian, the Data Warehousing Institute reveal data quality problems cost U.S. businesses up to 600 billion dollars a year.

Despite the consequences of poor data quality, very few organizations adopt a data quality management plan to assess, monitor, and improve the quality of their data. The lack of strategic planning and limitations in terms of resources, tools, and processes hold companies back. But it doesn’t necessarily have to be this way.

In this quick post, we’ll help you understand the first critical steps you can take to create a data quality management plan that will improve not just your data but also your operational efficiency.

Why is DQM Important?

82% of companies we’ve worked with reported poor data quality as their number one business challenge. For most of these organizations, their data is a valuable, intellectual asset that they are eager to use, but are struggling to make sense of.

Although there are hundreds of reasons to prioritize data quality management, a key reason that tops it all is your company’s bottom line.

At the end of the day, it all boils down to how much money you’re losing because of the resources, expenses and workarounds that need to be done to fix the consequences of poor quality data.

For instance:

- Every call a sales rep makes to an unqualified or poorly defined lead information is an expense.

- Every campaign with high email bounce rates because of obsolete email addresses is wasted opportunities (and resource time).

- Data decayed at 2% per month can result in thousands of lost opportunities

- Lists or records with duplicates and redundant data will be the cause of skewed data and inaccurate insights.

- Lists or records not matched against data compliance regulations can increase the risks of fines and penalties.

Over time, these seemingly minor problems balloon into recurring expenses that affect the bottom line – not to mention, lost deals, lost customers and lost credibility.

Globally, the average amount of inaccurate data has risen to 22 percent from 17 percent just 12 months ago. U.S. organizations actually believe they have the highest percentage of inaccurate data at 25 percent.

How to Determine the State of Your Company’s Data Quality?

The quality of data is fixed at the moment of creation. But we don’t actually judge that quality until the moment of use. If the quality is poor, people use short cuts around the data or fix the errors themselves. Not the right way to go about it. There are way too many dependencies that get affected because of poorly entered data.

So if you’re wondering why your reports are inconsistent, why your teams have to spend days to pull information from data, why your marketing campaigns are ineffective or why your organization is at the risk of a data security breach, it’s time to evaluate your company’s data quality.

To do so, we recommend a simple process.

- Assess Your CRM Data: A place to start is your CRM data. Have your team evaluate the quality of your data and determine the ratio of duplicates, messy or unstructured fields and invalid information in a list of 500 contacts. Look for inconsistencies in structures such as using a 24-hour time structure vs a 12-hour time structure. Look for incomplete information, such as null phone number fields. You can also hire a third-party data doctor to review the data and give you a report on data health.

- Too Many Sources of Data: An Oreily report suggests too many data sources as a primary data quality issue. Is your data stored in multiple CRMs? Do you have multiple systems to store customer information? Are you struggling to get a unified view of your customer journey and interaction with your company? Over 90% of clients we’ve worked with report disparate data sources as one of their most frustrating challenges. There are way too many systems storing information that gets decayed or redundant over time (usually just within months) and companies just can’t seem to consolidate or control this data. Even those that hired expert teams could not manage the accuracy of their information, despite spending millions of dollars bringing the best minds on board.

- Poor Insights and Business Intelligence Reports:

Data quality has transitioned from an operational function that was associated with efficiency and cost savings, to a strategic function powering consumer insight and overarching business intelligence. CIOs of companies we’ve spoken to always associate the need for quality data to customer specific goals such as personalization, better experiences and enhanced customer services. If you’re struggling with poor customer insights such as the difficulty of tracking customers across different touchpoints or understanding their pain points, it can be attributed to the poor use of data. Similarly, business intelligence reports that are based on outdated data – such as the common practice of using last quarter or last year’s sales data to determine this year’s growth or opportunities will be ineffective. The market is growing at an exponential pace. Data you had a year ago may not be accurate presently. Hence, data quality needs to be a real-time goal. Your organization needs data it can access in real-time.

Who is Responsible for a Data Quality Management Plan?

Data quality has moved on from being an IT problem to a socio-technological problem. Systems and processes can help to an extent, but it is incumbent on the organization to inculcate a culture-change that prioritizes data quality. After all, people and processes are almost always implicated in both the creation and the perpetuation of data quality issues.

Mandatory data quality training must be for all employees handling data. From sales reps to marketing managers to customer service and account managers – everyone needs to be trained on their role in the process. Moreover, they must be trained to recognize data quality issues and how to resolve the problem within defined data governance parameters.

For instance, it’s not uncommon for sales managers to create customized fields in a CRM for their own insights. Or, business users making changes directly in a record to save time and effort. All these problems cause significant data quality issues down the line. The customized fields used by that one specific manager may be rendered useless if the fields do not offer any critical value to the team as a whole. The changes made by the business manager is not replicated in other systems so that the time of analysis, the company will have conflicting records.

Digital technologies, automation tools etc can only do as much – you need your people to be aligned to the objective to see real progress. Data quality, therefore, is everyone’s responsibility.

How Do You Start with a Data Quality Management Initiative?

There are two approaches to data quality management – the active approach and the passive approach.

With the active approach, data quality is fixed at the point of data creation. This would mean implementing front-end controls (such as using dropdown menus instead of manual keying of data), tools governing software entry, and training programs for all.

The passive approach is using data quality tools to clean and normalize data. Consolidation of data from multiple sources and deduplicating it to remove redundancy is a key part of this approach. Additional activities include:

- Data match to remove duplicates and consolidate lists

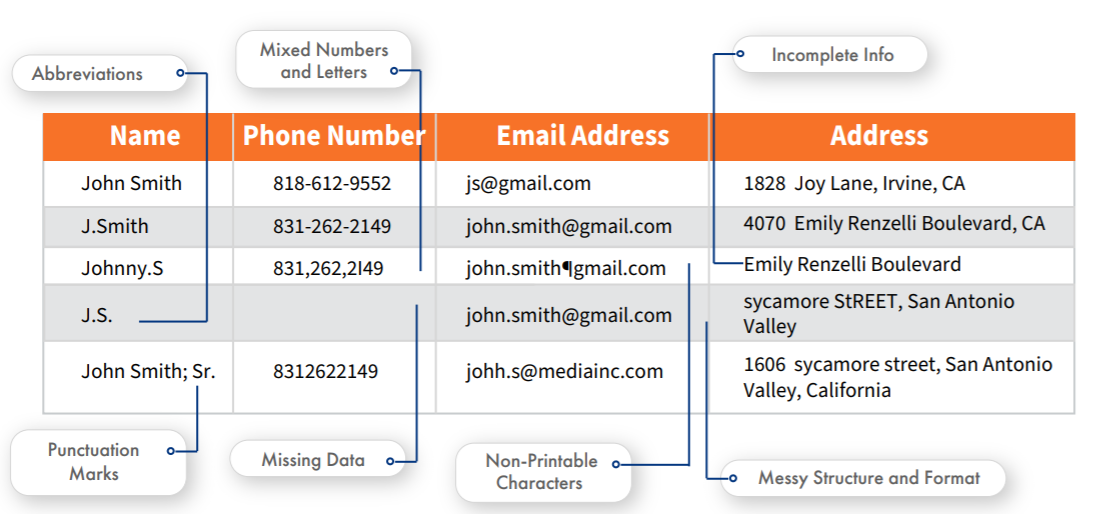

- Data normalization if your data has severe structural issues as given in the image below

- Data merge/purge to create golden records

- Data survivorship to overwrite all information with new information

Choosing the right approach will depend on the problem you’re currently facing and your budget. Most companies have a strong active approach well in place. What they struggle with is with the back-end of things – such as trying to consolidate lists to get homogenized customer data, or trying to match lists with U.S. sanctions or compliance lists. Some struggle with data quality during or after a company merger and acquisition.

Regardless of the approach or the situation, one thing is clear – data quality management is a consistent effort and not a one-time event.

To maximize return on investment, companies must implement people, process, and technology programs to continually monitor and improve data quality to maintain consistent, accurate, reliable, and trusted data.

How Data Ladder Can Help You in Creating a DQM Process?

Over the years, we’ve helped 4,500+ Fortune 500 companies including Coca Cola, Deloitte, HP to implement a DQM process. The company’s flagship data quality tool, DataMatch Enterprise, follows a systematic framework that allows a step-by-step treatment of data.

Being an on-premises tool, DME can be used on your cloud server or the desktop with the same results in terms of speed and efficiency. Additionally, it also allows for integration to over 500 data sources, which means you can directly plug in your data source to fix data without having to export it to any other format.

DME is a preferred tool of choice for organizations that need data cleansing and normalization as part of a data match initiative, which means with one tool, you get everything you need to make sense of your data.

To Conclude – To be ahead of the game, create a DQM plan now.

A competitive landscape charged by the dynamic power of data demands businesses to pay attention to data quality. It’s no longer about fixing numbers and alphabets – it’s quite literally about finding hidden opportunities, discovering key insights, maximizing returns on investments and paving the way forward to include AI and ML technologies. For all this to happen, you need data you can trust.