Last Updated on January 5, 2026

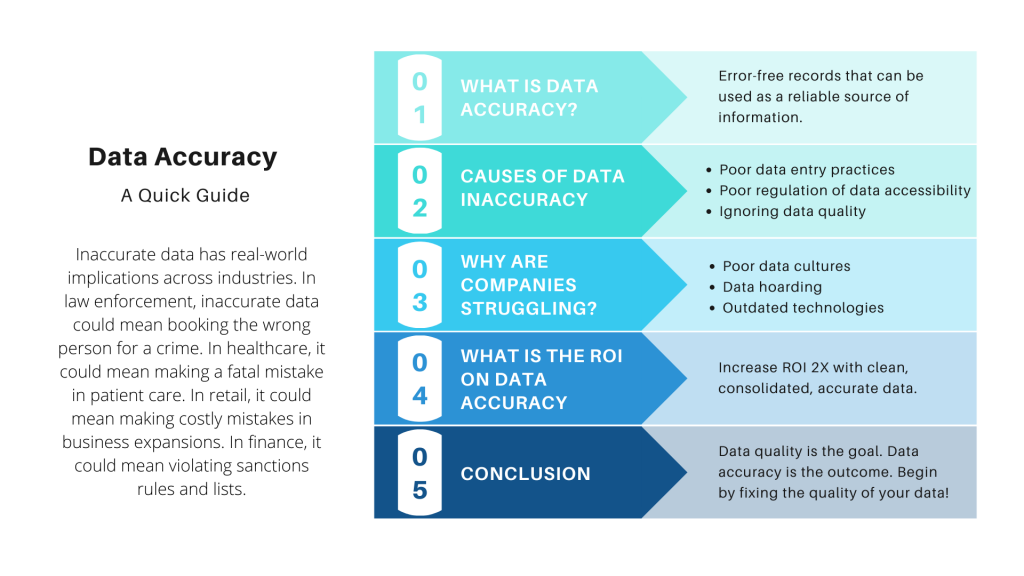

Inaccurate data has real-world implications across industries. In law enforcement, inaccurate data could mean booking the wrong person for a crime. In healthcare, it could mean making a fatal mistake in patient care. Within the retail industry, it could mean making costly mistakes in business expansions. In finance, the lack of data accuracy could mean violating sanctions rules and lists.

Data accuracy is no longer “just” a component of data quality frameworks. It is quite literally the most important characteristic that makes data usable and purposeful.

In this detailed guide, I’ll be covering everything you need to know about:

- What is data accuracy?

- What are the causes of data inaccuracy?

- The high cost of data inaccuracy

- Why it’s essential to maintain data accuracy

- Why are companies struggling with data quality and data accuracy?

- What steps you can take to improve data accuracy

- Some examples and important case studies

Let’s dig in.

What is Data Accuracy?

Data accuracy refers to error-free records that can be used as a reliable source of information.

In data management, data accuracy is the first and most critical component of the data quality framework. A widely cited work, Data Quality: The Accuracy Dimension by Jack Olson, explains form and content as two of the most important characteristics of data accuracy.

Here’s an example:

In a US database, dates follow the MM/DD/YYYY format, whereas in an EU database, and in most other countries of the world, the format is DD/MM/YYYY. If the value 09/10/2020 were read for its content, which of the two interpretations do you think would be accurate?

Is it September 10th or October 9th? This is the classic content vs. form problem that threatens data accuracy. It generally happens due to a lack of data standardization and rules.
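
Here is a minimal Python sketch of that ambiguity, using only the standard library's `datetime.strptime`. The `SOURCE_FORMATS` mapping and the `to_iso` helper are hypothetical names chosen for illustration. The point is that the same string yields two different dates unless the source's format is recorded alongside the value.

```python
from datetime import datetime

# Hypothetical mapping from a data source's region to its date layout.
SOURCE_FORMATS = {
    "US": "%m/%d/%Y",  # MM/DD/YYYY
    "EU": "%d/%m/%Y",  # DD/MM/YYYY
}


def to_iso(raw_date, source_region):
    """Parse a date using its source's format and return ISO 8601 text."""
    parsed = datetime.strptime(raw_date, SOURCE_FORMATS[source_region])
    return parsed.date().isoformat()


print(to_iso("09/10/2020", "US"))  # 2020-09-10 (September 10th)
print(to_iso("09/10/2020", "EU"))  # 2020-10-09 (October 9th)
```

Once the source format is known, storing every date in one standard form such as ISO 8601 (YYYY-MM-DD) removes the ambiguity for everyone downstream.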

It’s imperative that any data stored in a data warehouse is accurate and appropriate for use. It impacts an organization’s business intelligence, forecasting, budgeting, and other critical activities. If the data is irrelevant, incorrect, incomplete, or inaccurate, it can disrupt processes and hamper operational efficiency.

Data Accuracy vs. Data Integrity

Data accuracy and data integrity are closely related but distinct concepts.

- Data accuracy ensures that data is correct and free from errors. It reflects the truth of the data values.

- Data integrity, on the other hand, focuses on maintaining the reliability and trustworthiness of data over its lifecycle. It ensures that data remains consistent, unaltered, and protected from unauthorized changes.

While accuracy is about the correctness of data, integrity is about the structure, completeness, and safekeeping of that data. Both are essential for high-quality data but address different aspects of data management.

What are the Causes of Data Inaccuracy?

The causes of data inaccuracy are many. Some of the most common causes are:

Poor Data Entry Practices:

Data inaccuracy is the outcome of poor data entry practices. An organization that does not have data governance in place will see data entered in multiple formats, styles and varieties. For instance, one customer’s name may be written in three different ways by three different reps. Worse, data acquired from social media is highly prone to mistakes, typos and copy/paste errors.

Not Regulating Data Accessibility:

The CRM is a good example of this point. Accessed simultaneously by sales, marketing, customer service, and account managers, CRMs can become a hotbed of duplicated, inconsistent, inaccurate data. For instance, a marketing rep may want to verify the client’s company name before publishing a case study, only to see an incorrect spelling or a short form of the name in the Company Name field, perhaps typed or modified by a sales rep. The marketing rep will have to go through multiple verification rounds to fix this error. Worse, the client may end up pointing out the error if the case study was published without verification.

Data Quality is Not Usually Addressed:

Teams are too busy with selling, marketing and promotion to think about incorrect information in the data set. Leadership is too busy thinking of investments in cloud, big data systems, fancy software and technologies to worry about data. IT teams are too busy helping leadership in ‘transforming’ to fret about disparate, duplicate, inaccurate data. Data quality or accuracy is not a boardroom discussion matter. It only comes into the spotlight when something goes drastically wrong like a flawed report or an ineffective marketing campaign.

All these causes lead to losses in the millions.

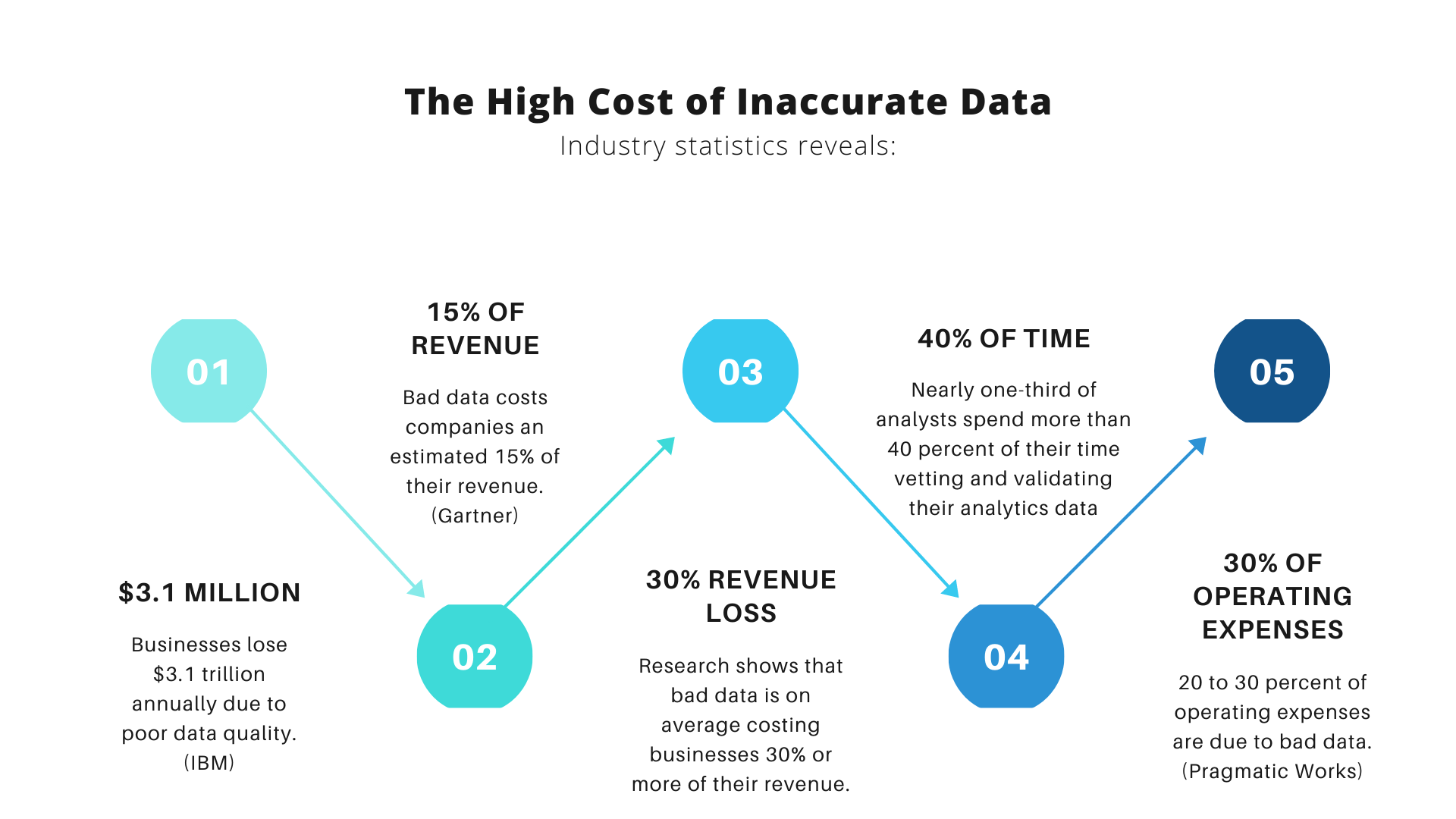

The High Cost of Inaccurate Data

Some statistics to drive home the point.

- Bad data costs companies an estimated 15% of their revenue. (Gartner)

- The average financial impact of poor data quality on organizations is $9.7 million per year. (Gartner)

- In the US alone, businesses lose $3.1 trillion annually due to poor data quality. (IBM)

- Multiple research reports have shown that bad data is on average costing businesses 30% or more of their revenue.

- Nearly one-third of analysts spend more than 40 percent of their time vetting and validating their analytics data. (Forrester)

- Knowledge workers waste 50% of their time in hidden data factories, hunting for data, finding and correcting errors, and searching for confirmatory sources for data they don’t trust. (HBR)

- 28% of those who have had problems delivering email say that customer service has suffered as a result of bad data. (E-Consultancy)

- 20 to 30 percent of operating expenses are due to bad data. (Pragmatic Works)

These statistics prove that inaccurate, poor data is a persistent problem in most organizations and one that has a tremendous impact on ROI, company reputation, and customer confidence.

Why It’s Essential to Maintain Data Accuracy

Insights. Analytics. Intelligence.

These are the three primary goals companies want to achieve with data. They are not achieved by more data. They’re achieved by accurate, complete, timely, reliable data.

It is through these goals that a business makes key decisions such as:

- Expansion to a new market

- Launching a new service

- Understanding market position

- Understanding competitors

- Understanding customers & personalizing customer services

- Enabling efficient company processes

… and so much more. Depending on the industry, data accuracy can make or break businesses. The real-world implications of inaccurate data cannot be ignored.

Why are companies struggling with data quality and data accuracy?

Millions are being invested in data management solutions. Yet an HBR study involving 75 executives found that only 3% had data within the acceptable range of 97 or more correct records out of 100.

Companies are struggling with data quality issues and maintaining data accuracy because their focus is only on gathering more data, instead of making current data usable.

In our experience with 4,500+ clients, there are three essential roadblocks preventing companies from maintaining accurate records.

Poor Data Culture:

Companies have not yet truly embraced a data-driven culture. There is significant investment in technologies but little to no investment in data awareness training. Employees are mostly oblivious to concepts like data quality or data accuracy. For a long time now, these practices have been restricted to the IT department. When it comes to customer data, business employees make changes at will with no adherence to any defined standards or data quality protocols. These gaps make it impossible for companies to achieve data accuracy, thereby compromising data integrity.

Data Hoarding Instead of Being Data-Driven:

There’s really no end to data hoarding. Companies are spending millions on big data technologies, gathering more data every passing day. But there is no system in place to make sense of that data. There are no resources available to clean, sort, and manage the data in time. There is no automation and definitely no process in place to resolve data quality issues, which leads to the third obstacle.

A Stubborn Reliance on Outdated Methods and Technologies:

In most organizations today, data is still manually prepared using Excel, SQL or ETL tools, all of which are incapable of handling the complexities of modern data, especially customer data obtained via social media, third-party vendors, web forms, or chatbots. Rife with errors, inaccuracies, and oddities, this data cannot be manually treated or prepared, as it would take months for a company to clean and match thousands of rows of data.

Not acknowledging the urgent need to ensure data quality hampers your progress and affects your ROI – which leads to our next important point below.

How to Calculate the ROI on Data Accuracy

ROI determines most, if not all, corporate decisions. As with every other process, we try our best to justify spending on data quality by measuring its ROI, and most executives take a traditional approach to this:

How much will we make in $ if we spend $ in fixing our data?

Well, here’s a non-traditional answer.

You increase your ROI when:

- Your company *DOES NOT* make mistakes because of bad data.

- You don’t spend every dollar fixing every record.

- Your teams have access to a clean, centralized data source to work with.

- The benefit of fixing data outweighs the cost of covering up mistakes and inefficiencies caused by bad data.

- You make confident decisions based on insights and intelligence reports derived from accurate data.

Fact is, the ROI of any data quality initiative is elusive. But the cost of poor data is pretty much evident. Businesses lose millions of dollars annually because of duplicates, outdated data, incomplete data, mismatched data, inaccessible and disparate data.

Here’s an example:

A subscription-based online learning company needs to match a million records obtained from three sources: lead forms, CRM, customer service.

Using traditional ETL tools, the company performs the match but ends up with 3.5% false negatives and 6.8% false positives. These errors alone cost the company in lost sales and in manpower hours (spent manually reviewing each false negative and false positive). If the company had taken a data quality initiative seriously, it could have prevented 10.3% of its total records from being wasted.

10.3% of a million = 103,000

Assuming each customer pays $100

That’s 103,000 x $100 = $10,300,000

More than ten million dollars in wasted revenue. That’s a huge blow to any company’s profitability.
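
The arithmetic in this example can be sketched in a few lines of Python. This is a rough illustration, not a full ROI model. The function name `wasted_revenue` and its parameters are made up for this sketch, and it assumes, as the example does, that every mismatched record is a lost customer worth a flat amount.

```python
from decimal import Decimal


def wasted_revenue(total_records, false_negative_pct, false_positive_pct,
                   revenue_per_customer):
    """Return (wasted_records, wasted_revenue) for a record-matching job.

    Percentages are passed as strings (e.g. "3.5") so Decimal keeps them exact.
    """
    # Assumption from the example: every mismatched record is a wasted record.
    mismatch_pct = Decimal(false_negative_pct) + Decimal(false_positive_pct)
    wasted_records = int(total_records * mismatch_pct / 100)
    return wasted_records, wasted_records * revenue_per_customer


records, revenue = wasted_revenue(1_000_000, "3.5", "6.8", 100)
print(f"{records:,} wasted records, ${revenue:,} in wasted revenue")
```

Plugging in your own record counts, match error rates, and average customer value gives a first, admittedly crude, estimate of what bad matching costs.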

Ready to do what’s necessary to improve data accuracy?

Here’s what you can start with.

What Immediate Steps Can You Take to Improve Data Accuracy?

You may be tempted to hire a data analyst. Or perhaps change your CRM. Or maybe task your IT teams (again) to come up with a solution.

Stop.

None of this is going to work.

So, what will?

Some immediate steps I recommend would be:

Conduct a data quality audit:

Find out the top five issues affecting your data quality. Some of the most common issues you’ll find are duplicates, incomplete information, and data stored in multiple silos, with more than half of them either obsolete or long forgotten.

Measure the estimated impact:

Say you have a thousand new leads this month. How many of those leads are actually usable? If it’s just two or three hundred, you’re losing revenue.

Measure the manpower needed to fix this data:

How much time are your reps spending on verifying and fixing information? Are they doing it manually? How many phone calls are being made, and what is the average duration of each call? Assess the cost of manually fixing this data. If it’s taking your team a month to verify, clean, dedupe and fix a 1,000-row data set, you need to look for a faster, more automated solution.

Opt for an automated solution:

While auditing your data, you might realize you need a data matching solution that can remove duplicates and perhaps consolidate multiple data sets from multiple sources into a single, consolidated golden record. Many organizations use Data Ladder’s DataMatch Enterprise solution to match and dedupe data. Along with that, they also get to profile, clean, standardize and prepare their data.
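
To make the idea of a golden record concrete, here is a deliberately simple Python sketch. It is not how DataMatch Enterprise or any other product works. It assumes every record is a dict of strings, uses a hypothetical `email` field as the match key, and only does exact matching on a normalized key, where real matching tools also handle fuzzy and phonetic matches.

```python
from collections import defaultdict


def normalize_email(email):
    """Standardize the match key so trivial variants compare as equal."""
    return email.strip().lower()


def build_golden_records(records):
    """Group records by normalized email and merge each group into one record.

    For each field, the first non-empty value seen in the group wins.
    """
    groups = defaultdict(list)
    for record in records:
        groups[normalize_email(record["email"])].append(record)

    golden = []
    for key, duplicates in groups.items():
        merged = {"email": key}
        for record in duplicates:
            for field, value in record.items():
                if field == "email":
                    continue
                # Fill the field only if no earlier duplicate supplied a value.
                if not merged.get(field):
                    merged[field] = (value or "").strip()
        golden.append(merged)
    return golden


sources = [
    {"email": "Ann@Example.com ", "name": "Ann Lee", "phone": ""},   # lead form
    {"email": "ann@example.com", "name": "", "phone": "555-0100"},   # CRM
    {"email": "bob@example.com", "name": "Bob Ray", "phone": ""},    # support
]
for row in build_golden_records(sources):
    print(row)
```

The two records for Ann collapse into one that carries both her name and her phone number, which is the essence of consolidating multiple sources into a single trusted record.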

Hire a data analyst to manage data preparation:

You may think of hiring a data analyst and letting them do the cleaning, but that’s hardly the right use of their skills. Your data analyst must be empowered with the right tools and resources to help you get accurate data. They must be the thinkers and the strategists, while the solution you equip them with is the doer. Your analyst must not spend 80% of their time doing janitorial work (experts describe data cleansing as data janitorial work, and that’s not fun!). Let the solutions do the cleaning and let your analysts do the thinking!

Focus only on specific data sets to fix as opposed to a whole data source:

It’s impossible to perform a blanket operation on your entire data source or database. Not only will that be useless, but it will also be a waste of effort. Instead, focus on optimizing data you need for immediate tasks – such as cleaning data of the last six months to prepare for a bi-annual report, or data of the last quarter to prepare a promotional campaign. As you focus on fixing the important data first, you’ll gradually get around to sorting other data and get to decide what you want to keep and what you want to discard.

With these immediate steps, you can prepare your teams to handle essential business operations such as an upcoming migration initiative, a major promotional campaign or a business intelligence report. The fundamental goal of possessing accurate data is to ensure data integrity. Your data can make or break your business. Do you care enough to fix it?

Cleveland Brothers Case Study

Cleveland Brothers Equipment Company Inc. operates as an equipment dealer and supports a number of industries that include construction, agriculture, power generation, landscaping and more. This means the company deals with multiple customer data sets coming from multiple sources, with multiple interests and needs.

The company needed a data quality management solution that could allow them to dedupe data and cross-reference contact information such as names, phone numbers, billing addresses, and company names. They also needed the solution to help them with data cleansing and data standardization.

DataMatch Enterprise was the company’s tool of choice, helping them perform several key operations at once. Because the tool is easy to use and requires no additional programming expertise, even the company’s business staff were able to prepare data easily.

Key Performance Indicators (KPIs) for Measuring Data Accuracy

To ensure your data is accurate, track specific KPIs. Start with:

- Error rate – The percentage of data entries that are incorrect or inaccurate.

- Data consistency – The uniformity of data across different systems or datasets.

- Data completeness – The extent to which all required data is present.

- Timeliness – The relevance of data based on how up to date it is.

- Validity – The degree to which data adheres to predefined formats and rules.

Together, these KPIs paint a clear picture of your data’s accuracy. Use them to spot issues and drive improvements.
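
As a rough sketch of how three of these KPIs could be computed, the Python below scores a small list of records. Everything specific here is an assumption for illustration: the field names, the `REQUIRED_FIELDS` tuple, the simple format patterns, and the idea that error rate is measured against a manually verified sample. Consistency and timeliness are left out because they depend on comparing systems and on your own freshness rules.

```python
import re

# Hypothetical schema and format rules for this sketch.
REQUIRED_FIELDS = ("name", "email", "signup_date")
EMAIL_RE = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")
DATE_RE = re.compile(r"^\d{4}-\d{2}-\d{2}$")  # YYYY-MM-DD


def _pct(count, total):
    return 100 * count / total if total else 0.0


def completeness(records):
    """Percentage of records with every required field filled in."""
    complete = sum(
        all((r.get(f) or "").strip() for f in REQUIRED_FIELDS) for r in records
    )
    return _pct(complete, len(records))


def validity(records):
    """Percentage of records whose email and date match the expected formats."""
    valid = sum(
        bool(EMAIL_RE.match(r.get("email") or ""))
        and bool(DATE_RE.match(r.get("signup_date") or ""))
        for r in records
    )
    return _pct(valid, len(records))


def error_rate(records, verified):
    """Percentage of verified records that disagree with the trusted values.

    `verified` maps a record id to the field values confirmed as correct.
    """
    checked = [r for r in records if r["id"] in verified]
    wrong = sum(
        any(r.get(f) != v for f, v in verified[r["id"]].items()) for r in checked
    )
    return _pct(wrong, len(checked))


records = [
    {"id": 1, "name": "Ann Lee", "email": "ann@example.com", "signup_date": "2020-09-10"},
    {"id": 2, "name": "Bob Ray", "email": "bob@example", "signup_date": "2020-10-09"},
    {"id": 3, "name": "", "email": "cy@example.com", "signup_date": "09/10/2020"},
    {"id": 4, "name": "Dee Fox", "email": "dee@example.com", "signup_date": "2021-01-05"},
]
verified = {1: {"name": "Ann Lee"}, 4: {"name": "Dee Foxx"}}

print(completeness(records), validity(records), error_rate(records, verified))
```

Tracking these numbers over time, rather than as a one-off, is what turns them into KPIs.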

Data Accuracy Best Practices

Ensuring data accuracy is crucial for any organization. Follow these best practices:

- Automate Validation – Use automated tools to check data for errors in real time. This helps catch mistakes early.

- Standardize Data Entry – Implement uniform procedures for data input. Consistent formats reduce errors.

- Regular Audits – Schedule frequent data reviews. Audits help identify inaccuracies before they escalate.

- Train Your Team – Educate employees on the importance of accurate data and the correct methods for handling it.

- Use Quality Software – Invest in data management tools designed to maintain accuracy. These tools often include features for error detection, consistency checks, and validation rules.

- Enforce Data Governance – Establish clear guidelines and responsibilities for data management. Proper governance ensures that everyone in the organization follows best practices.

By implementing these steps, your organization can maintain high data accuracy and make better, data-driven decisions.
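
The first two practices, automated validation and standardized data entry, can be illustrated with a small Python sketch. The field names and rules here are assumptions made up for the example (for instance, the 10-digit phone rule), not a recommended standard. The idea is simply to standardize a record first and then report every rule it breaks before it is saved.

```python
import re


def standardize(record):
    """Apply uniform entry rules: trimmed names, lowercase emails, digit-only phones."""
    return {
        "name": " ".join(record.get("name", "").split()),
        "email": record.get("email", "").strip().lower(),
        "phone": re.sub(r"\D", "", record.get("phone", "")),
    }


def validate(record):
    """Return a list of problems found in an already standardized record."""
    problems = []
    if not record["name"]:
        problems.append("name is missing")
    if not re.fullmatch(r"[^@\s]+@[^@\s]+\.[^@\s]+", record["email"]):
        problems.append("email is not well formed")
    if len(record["phone"]) != 10:  # hypothetical rule for this sketch
        problems.append("phone must have 10 digits")
    return problems


entry = standardize(
    {"name": "  ann   lee ", "email": " Ann@Example.COM", "phone": "(555) 010-0199"}
)
print(entry, validate(entry))
```

Running checks like these at the point of entry means a rep sees the problem immediately, instead of an analyst discovering it months later.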

Trends and Future Outlook

Data accuracy is evolving rapidly as new technologies and methodologies emerge. One major trend is the rise of AI-driven data validation. These tools can detect and correct errors with minimal human intervention, enhancing accuracy. Real-time data accuracy monitoring is also gaining traction, allowing organizations to ensure data quality as it enters their systems. Furthermore, the growing emphasis on data democratization means that more teams have access to data, increasing the need for accurate data across the board. Looking ahead, integrating these trends into your data strategy will be crucial for staying competitive.

Don’t Compromise on Data Accuracy. Your Company Needs Data It Can Trust.

Data quality is the goal. Data accuracy is the outcome. With the right human and technological resources, your company will be in a better position to step into the future confidently.
